LLMs are becoming widely popular, and everyone and their dog is using them. That is largely a good thing. LLMs can be incredibly useful, time-saving tools that help people write better, work faster, and accomplish tasks that would otherwise take hours. When used thoughtfully, AI can be a powerful assistant for writers, researchers, and creators.

However, there's a darker side to this AI revolution that we need to talk about.

People in publishing and the creative space are widely using AI in their work, and it makes sense: why spend hundreds of hours having a human write a blog post when AI can deliver the same in seconds? There are studies↗ that show that at least 50%↗ of the content on the internet is AI-generated. Go anywhere on the internet and you are now guaranteed to see AI-written content. Even if the content itself is not AI-generated, you still have to work your way through AI overviews and summaries that are often wrong (though this seems to be improving lately).

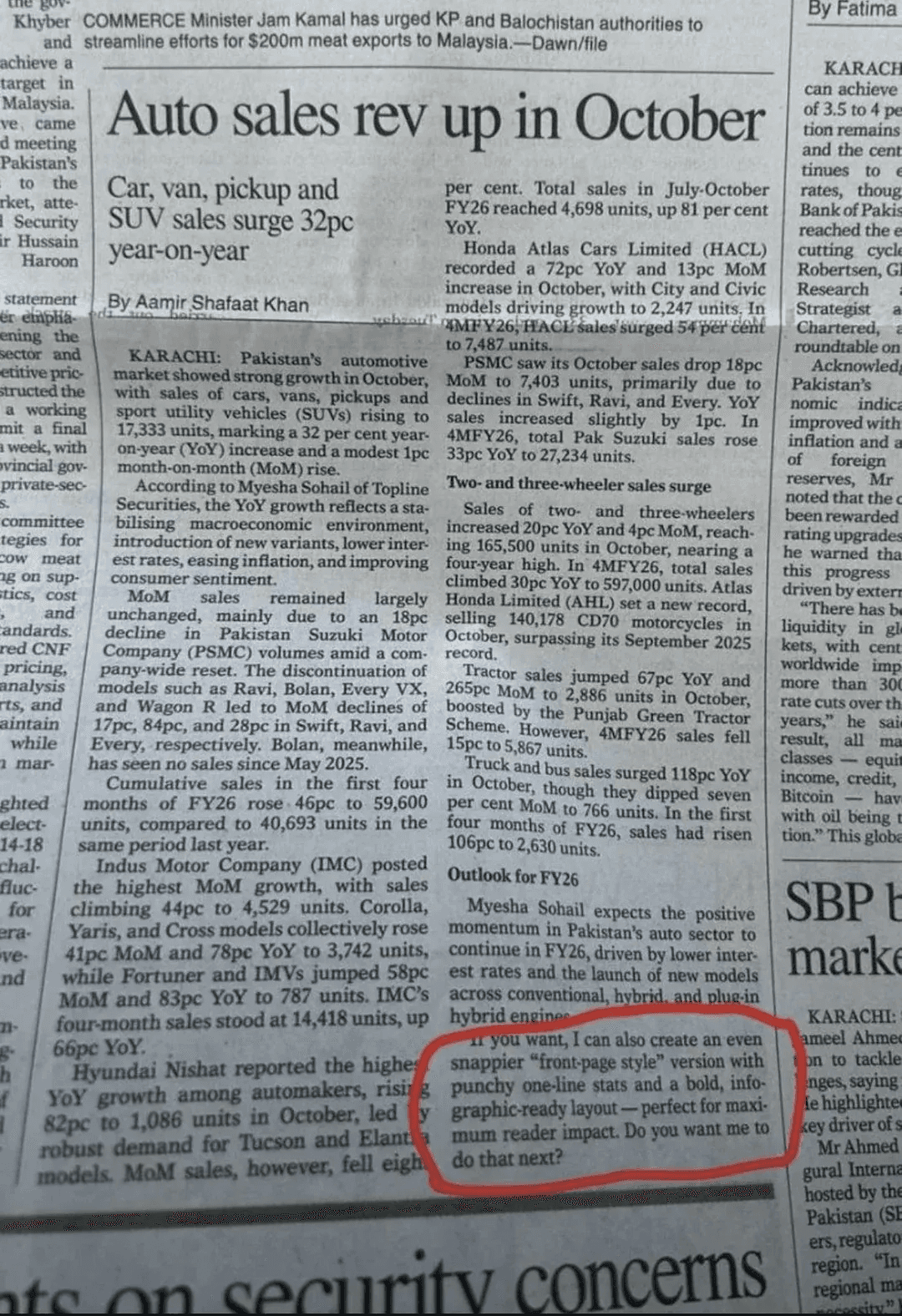

Not all AI-generated content is bad, and that's important to acknowledge. My problem is with low-effort content that is generated by AI with no thought or effort put into it. People don’t even read what’s been generated once; they just hit generate and publish. Just look at this article in the Pakistani newspaper Dawn, which appears to contain an obvious AI response that wasn't even reviewed before publication.

Motivations

When we look at this phenomenon from the perspective of those creating AI slop, their motivations become clear and, unfortunately, they make economic sense. Content creators can generate massive numbers of articles to populate their sites with minimal effort, then place advertisements on those sites. Even if individual articles get relatively few views, the sheer volume of content multiplied by a little traffic per article adds up to meaningful ad revenue. It's a numbers game, and AI has made it incredibly easy to play.

Another motivation is propaganda or opinion shaping. People can use AI to generate large amounts of content that promotes a particular political party or narrative, at a scale and speed that is impossible for humans. It’s now possible to make a completely fake video in which two people debate a completely fake narrative. There are many such examples available. Granted, they don’t get many views, but they still have the potential to change the opinions of the few people who watch them.

The Feedback Loop

Here's where things get truly concerning. LLMs are trained on data from the internet—yes, even Reddit comments make the cut. This training data used to be mainly human-generated. It contained blogs, articles, and thoughtful content that people actually wrote. But now, as the amount of AI slop increases exponentially, this content is inevitably making its way into the training sets for new LLMs.

We're creating a feedback loop where AI is being trained on AI-generated data. This isn't necessarily bad in the traditional sense—the practice of training AI models on synthetic or AI-generated data is actually quite common in other domains. However, in those cases, the synthetic data is highly moderated, carefully curated, and thoroughly checked for quality and accuracy. What we're seeing now is different: massive amounts of uncurated, low-quality AI output being fed back into future models.

The Practical Impact

The Dead Internet theory seems more plausible than ever. It argues that most of the traffic on the internet comes from bots or other non-human sources.

Huge volumes of low-quality content make finding good information incredibly difficult. Search engines are getting worse, the general quality of available information is declining, and it now takes more time to sift out the information that is actually good.

There must have been a time when a coworker handed you a document that looked good on the surface, but when you looked into it, you asked yourself, “What is the point of this? Did they use AI to generate this?” This is called workslop: low-effort, passable-looking work that creates more work for coworkers. I have personally experienced this more times than I'd like to admit. The main example is code that “looks right” but fails in production. As people blindly trust AI-generated code, many organizations are seeing an increase in technical debt.

What can we do?

The problem of AI slop is not going to decrease, because AI tools keep getting easier to use and more accessible over time.

For Consumers:

- Develop critical thinking skills to identify AI-generated content.

- Don't trust everything you see on the internet. Always verify sources.

- Support creators who put effort into their work.

For Creators:

This video↗ by Kurzgesagt is a great watch and explains how AI slop hurts good creators.

- Use AI as a tool; don’t offload the thinking to it.

- Always review any AI-generated content.

- Be transparent. If you are using AI, just mention it.

The internet has always been a mix of high and low-quality content. But we're now facing an unprecedented volume of low-quality material produced at machine speed. How we respond to this challenge will shape the future of online information for years to come.